Can ChatGPT write an academic paper?

Adrien Foucart, PhD in biomedical engineering.

This website is guaranteed 100% human-written, ad-free and tracker-free. Follow updates using the RSS Feed or by following me on Mastodon

Adrien Foucart, PhD in biomedical engineering.

This website is guaranteed 100% human-written, ad-free and tracker-free. Follow updates using the RSS Feed or by following me on Mastodon

Mashrin Srivastava tried an interesting ChatGPT experiment: a “paper completely written by ChatGPT” (originally published on LinkedIn, now available as a preprint on ResearchGate).

He appended to the generated paper the whole sequence of prompts and responses that was used to generate it. The original prompt was for an article to submit to a workshop on Sparsity in Neural Networks at ICLR 2023.

I don’t think it makes a lot of sense to review the resulting paper like I would do for a regular paper (I can’t pretend that I don’t know it’s a generated text), but I think the exercise is really interesting, so I want to take a deep dive into it.

A few disclaimers before I start:

Okay, let’s get started !

I started from the Appendix, so I could follow along the generation of the paper to better get a sense of where the ideas came from and how much “prompting” it actually needed. It’s really great from Mashrin to include all of it, as it makes the analysis way more complete. Thanks!

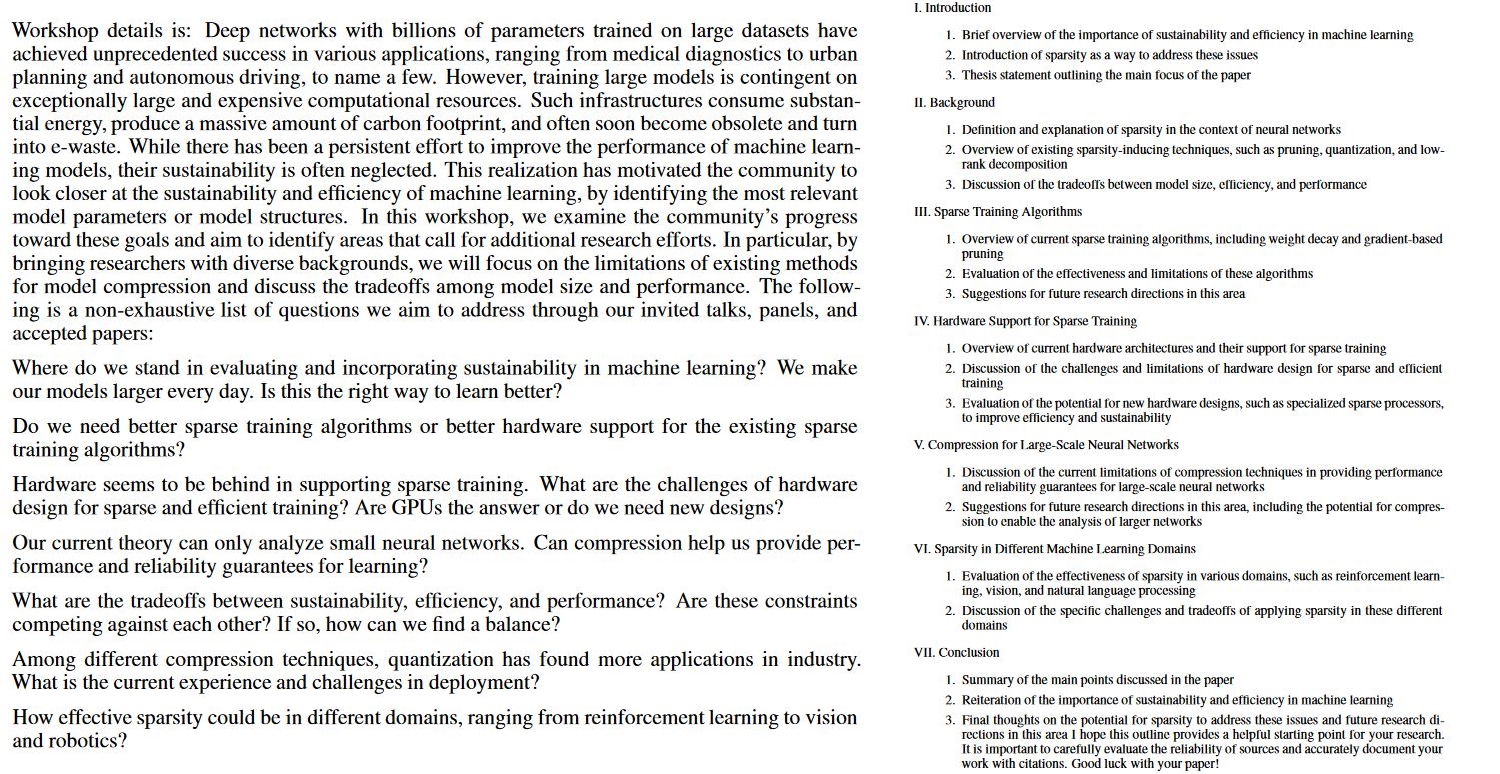

The first prompt asked for a paper idea for the workshop, giving to ChatGPT the workshop description.

Here, ChatGPT mostly takes point by point all the proposed topics from the workshop description, and arranges them into sections. This is fairly typical from what I’ve seen of ChatGPT: it tends to really want to include everything you mention in its answers.

The result here is that the outline proposes a wide but superficial survey paper, which would not really be fitting for a workshop. The structure reads more like the chapters of a book than the sections of a conference paper.

So: not a great start, but nothing particularly bad right now.

Large portions of the introduction are again directly taken from the workshop description. It then rewrites the paper outline, which itself also came from the prompt. Having the outline explained in the intro is normal, but this means that right now we still don’t have any added value from ChatGPT: if we want to know what are interesting topics related to sparsity, we can read the workshop description and we’ll have as much info as with the current intro.

Also, at this point we have zero citations, including for assertions of facts such as:

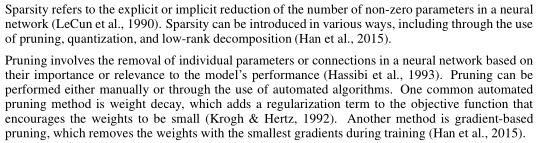

The first problem here – caught by Mashrin – is that there is still zero citations, which becomes very problematic in what is typically a citation-heavy part of a paper.

The provided definitions are then very surface-level, and arguably wrong: for instance, “weight decay” is not by itself a pruning technique. It’s a regularization technique which can be used in conjunction with a pruning method which removes connections with weights that are close to zero.

In general, the level of the explanations at this point could be acceptable for a student assignment (assuming it’s correct, I don’t see many obvious errors but there could be), not quite for an actual research paper.

Mashrin then asks ChatGPT to provide citations and to add more details “for a research paper.” We’re moving away a bit from the “completely generated by ChatGPT” scenario, but that’s a bit nitpicky so let’s keep going.

The response doesn’t have more details, but did add citations and a reference list, so let’s go through that.

The first reference is “Sparsity refers to the explicit or implicit reduction of the number of non-zero parameters in a neural network (LeCun et al., 1990).” LeCun et al. is not in the reference list (which I think just got truncated due to limits on the output size). Much later in the process, Mashrin asks ChatGPT to provide a BibTeX citation for it, to which we get a reply mentioning a paper called “Optimal Brain Damage” by LeCun, Denker, Solla, Howard and Jackel. This is not exactly correct, as that paper is from 1989 and with only LeCun, Denker, and Solla. While the paper does talk about pruning and reducing the size of a network, it does not provide such a definition of sparsity.

So let’s check some other references:

Let’s stop here for a moment: the citations are generally “on topic,” but they are clearly not the source of the information.

Credit where it’s due, however: ChatGPT shows itself here to be a decent source of papers to look at to actually get to know the topic… at least from a fairly general perspective (up to now at least this is all very superficial).

Still looking at the citations, here our first is to Krogh and Hertz, 1992, but in the references the title corresponds to another paper from the same authors (from 1991). Again, the citation is “on topic,” but does not explicitly provide the assertion cited.

We also here see repetitions from things already written in the previous chapter. This section barely brings any new details or new pieces of information to the table. The algorithms provided are:

Then it does some improv on the limitations of those two “methods” (with again van der Maaten cited for no reason).

Mashrin prompts it again for “more sparsity algorithms.” We get:

Mashrin asks for more again, and we get “Dynamic sparsity” (the text doesn’t really correspond to what the paper describes); “Structural sparsity,” which cites a paper by Gao et al. that doesn’t exist, and provides an incorrect definition; and “Functional sparsity,” which cites an article with the wrong authors and year (and which doesn’t correspond to the explanation, which is kind of nonsensical as “constraining the activation to follow a specific function” doesn’t mean anything: that’s just what activation functions do in general).

So, to summarize: rehashing things that superficially develop what was in the prompt, with “algorithms” that and are not particularly well explained, are often badly attributed, are badly named so that it’s hard to find more about them, or just don’t exist.

I’m not going to be as detailed for the rest, as it would get very repetitive very quickly, but in the next section on Hardware the first citation is already wrongly attributed, and we keep the same pattern: superficial explanations that “sound right” but are often imprecise, incomplete or just wrong, with citations that don’t match (when they exist).

In the “compression” section, we see the same techniques that were already presented before (pruning, quantization), so no new information.

At some point, Mashrin asks for a novel idea for future research in the area of compression for large-scale neural networks. This moves again away from the “all-written-by-ChatGPT” concept, but it’s interesting. Lack of novelty is often seen as one of the main limitations of LLMs. So what can ChatGPT come up with?

Well, we see a reformulation of ideas that were already presented before (“adaptively adjust the level of sparsity in the network based on the specific characteristics of the input data,” which nearly matches what Dynamic Sparsity was presented as). The proposed method also doesn’t really make sense. “Jointly optimizes the network weights and the sparsity pattern of the network based on the input data?” … yes? The “sparsity pattern” is directly linked to the “network weights” (connections that can be pruned are connections with weights close to zero), and the training is obviously based on the training data, so what does that even mean? The rest of the explanation similarly makes no sense (but it “reads” nicely!)

ChatGPT is prompted for more novel ideas.

We get… really rewording of the same, or of previously explained concepts, or so vague as to be completely unusable.

Anyway, I think we get the point. The conclusion is also mostly empty of actual content. It’s basically all “sparsity would be more efficient, so it’s nice, but there are tradeoffs, and it’s difficult.”

Finally, Mashrin asks for an abstract and bibtex citations of the references. The references, as we’ve seen, are mostly accurate, but sometimes made up, which is exactly what you want from an academic paper!

So all of these results from the prompts were then compiled into a full paper. At least one citation has been changed in the full paper from the prompts results (LeCun, 1990 has become Liu, 2015, I haven’t gone throuhg all), but otherwise it’s just some reformatting.

What can we make of all of this? Here are my main thoughts:

That’s really important to note again, I think, because it shows a key problem with LLMs – they don’t have, nor understand, intent. Well, they don’t understand anything, but let’s move on from that.

The original prompt was to “suggest a paper for the below conference workshop on Sparsity in Neural Networks.” This is not the kind of venue where you try to write a global review of the whole domain discussed in the workshop. Such workshop are typically for really novel – often work-in-progress – ideas that can move the field forward in one or a few of the specific topics ([just look at the list of paper from the 2022 edition). Everything that is in this ChatGPT paper would (hopefully) be considered basic, common knowledge for attendees of such a workshop.

I mean, the parts that are correct.

Some people seem to get really upset when they are reminded that LLMs are “stochastic parrots,” but this paper is a great example of how true that is.

It’s really just a word generator.

The structure of the “proposed paper” is taken directly from the prompt. For all the “detailed” section, it’s a lot of superficial, sometimes nonsensical paragraphs that are “on topic” but don’t bring anything new to the table.

It doesn’t even really work as a “quick review,” which would be fine for personal usage if not for publication, because too much of it is just empty of content or wrong. The citations are sometimes relevant, but most of it is way too old to be really good starting points to get the state-of-the-art.

From what I can see here: no. I can’t say exactly how I would feel seeing this as a reviewer without knowing that it was a ChatGPT-generated text, or without going through the prompt, but it would certainly be a hard reject from me.

Besides the fact that it’s not appropriate for the workshop, there are too many obvious red flags. The superficiality, the repetitions, the citations that don’t match the text… These are all things that I notice when I review papers or grade student work, and there are so many of them here that there is no chance that I would let it pass.

I’m not enough of a specialist in questions of sparsity techniques and efficiency in neural networks, so I can’t really judge how wrong it is… but that’s even more of a red flag: even as a non-specialist, it’s very obviously wrong in many places.

If it’s a topic you don’t actually care about and you just want to have a superficial level of knowledge to get the bare minimum to pass a class, sure.

Otherwise I would avoid.

I was skeptical before reading the paper, but I tried to keep an open mind (believe it or not!).

I must say I’m absolutely impressed by the quality of the text. It reads like a scientific paper. I totally understand why many people see this as amazing.

But in the end… Stochastic parrot remains the best description there is. As soon as you go beyond the superficial level of “reading the text,” and you try to parse its meaning and verify what it says, it breaks down into noise.